Motivation

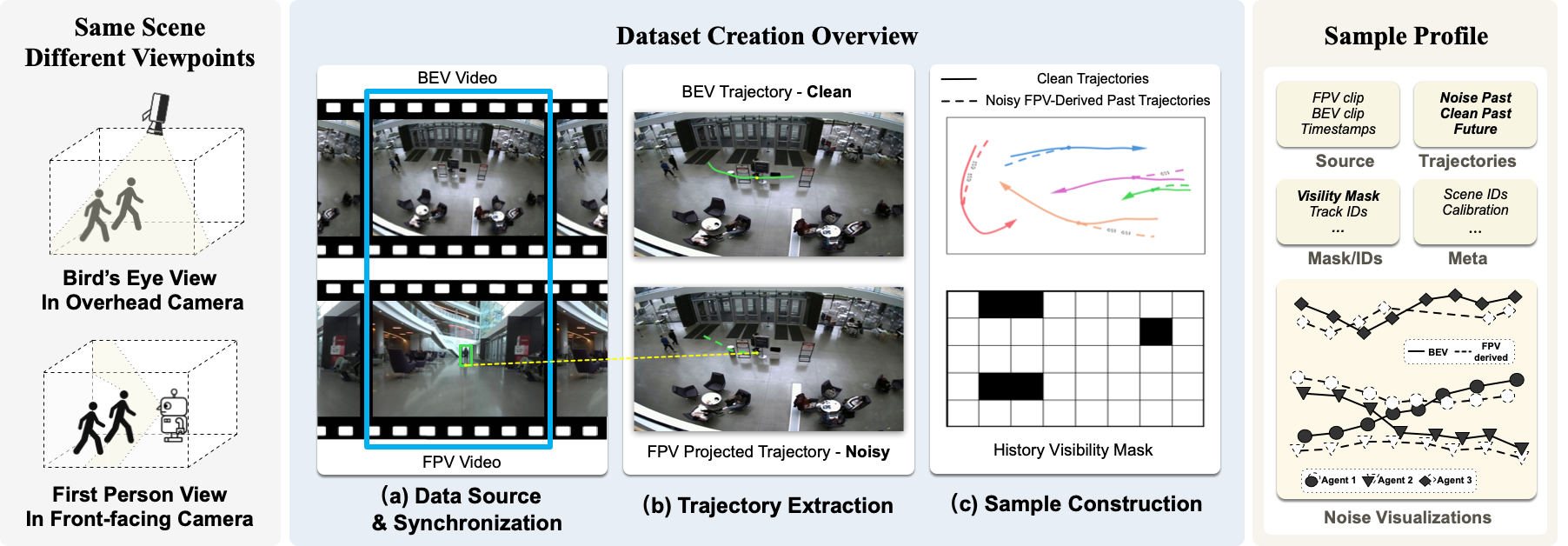

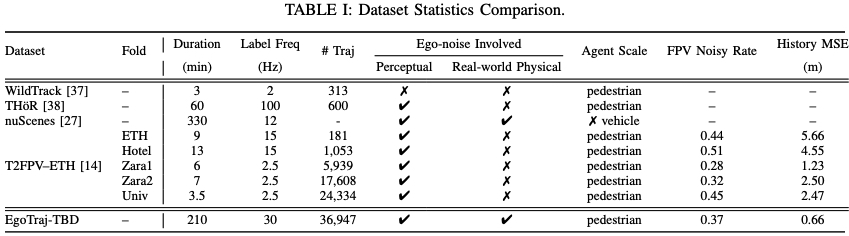

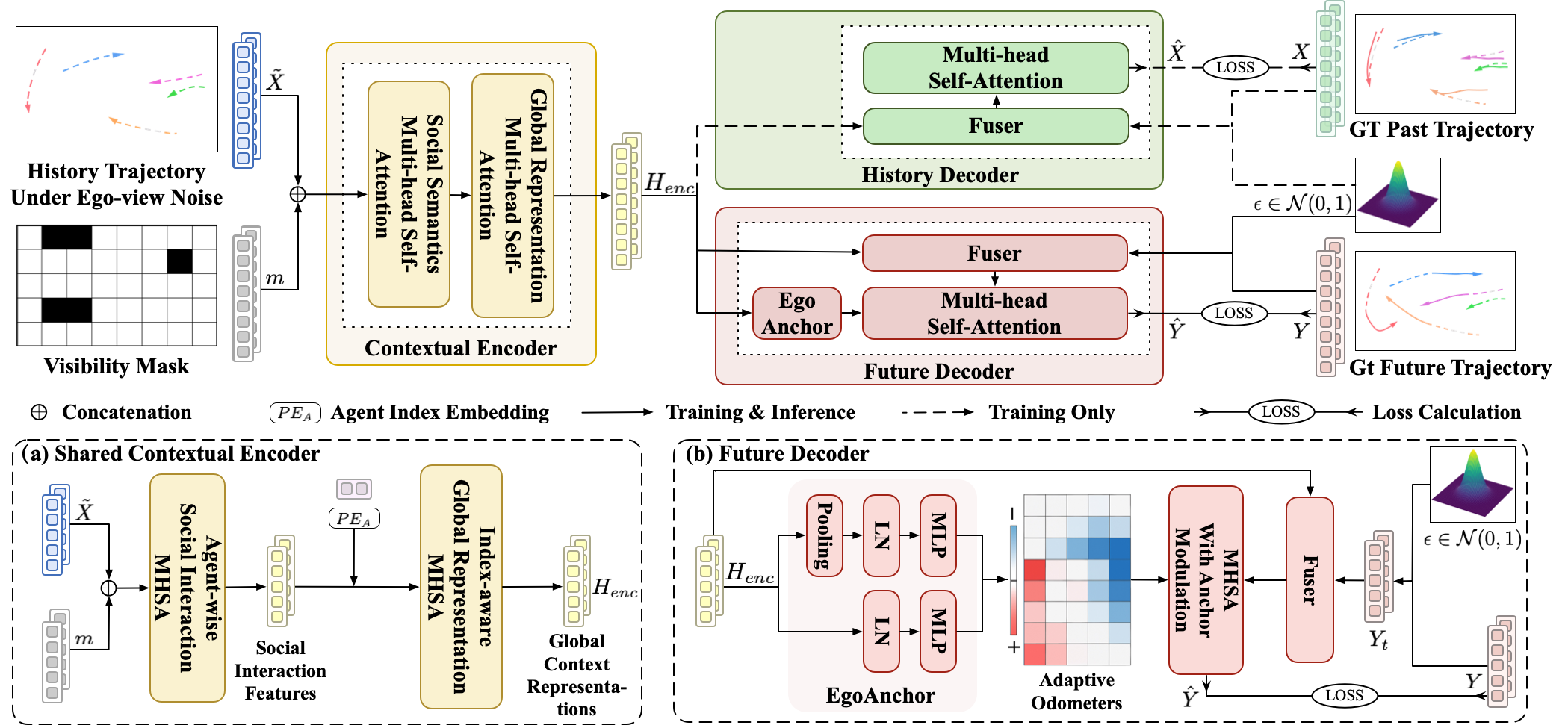

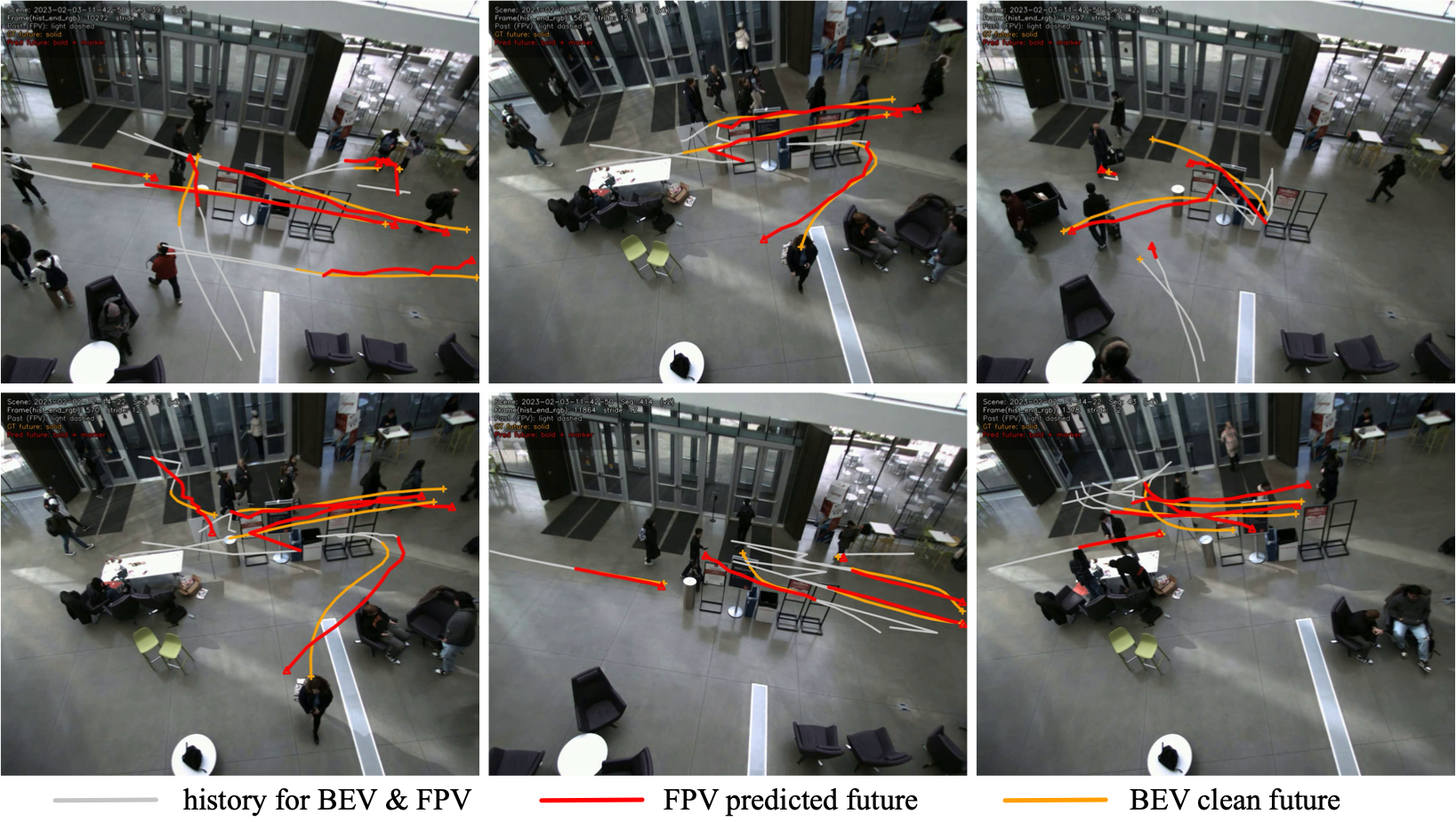

Most trajectory prediction methods are trained and evaluated under the assumption of clean, complete observations from a bird's-eye view (BEV). In real robot deployment, however, observations come from a first-person view (FPV) camera, where occlusions, ID switches, tracking drift, and perspective distortions are unavoidable. This gap between training assumptions and deployment reality severely limits model robustness.

■ Cyan: occlusion-induced gaps · ■ Red: ID switches · ■ Green: ego-centric perspective distortions.

Dashed: first-person view (FPV) derived history · Solid: bird's-eye view (BEV) derived trajectory.

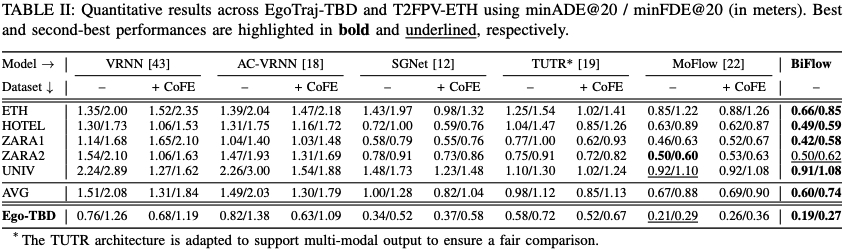

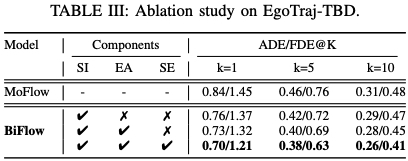

The radar chart below compares the state-of-the-art model MoFlow evaluated on clean BEV histories versus noisy FPV histories, across all ETH-UCY folds and the TBD dataset. With FPV noise, performance degrades sharply on every dataset.

MoFlow minADE@20 under BEV (clean) vs. FPV (noisy) settings. The large gap across all datasets motivates the need for benchmarks that explicitly model ego-view noise.

EgoTraj-Bench directly addresses this gap by pairing real-world noisy FPV histories with clean BEV future ground truth, enabling fair evaluation and robust learning under deployment-level noise.